Testing is one of the fastest ways to improve conversion rate, click rate, repeat order rate, and revenue. But most brands don’t have a testing problem. They have a testing discipline problem.

They run experiments without defining success. They test multiple variables at once. They forget why they started the test in the first place.

If you want testing to drive meaningful growth, you need a simple, repeatable process. Here’s how to build one.

Step 1: Brainstorm without limits

Start with curiosity.

As a team, create a list of tests you want to run. At this stage, there are no bad ideas. Focus on the core metrics you’re trying to improve:

- Conversion rate (CVR)

- Click rate

- Repeat order rate

- Orders

- Revenue

- Average order value (AOV)

- Unsubscribe rate

The goal isn’t perfection. It’s volume. Capture everything that could move performance, from creative tweaks to flow timing to segmentation strategies.

Then move to discipline.

Step 2: Define success before you launch

Before you run a test, write down the single KPI that defines success.

This matters more than most marketers realize.

Tests often produce mixed results. For example:

- The variant has a higher click rate.

- The control generates more revenue.

If you didn’t define success upfront, your team debates after the fact. That slows decisions and weakens trust in testing.

Instead, decide in advance:

What metric determines the winner?

If revenue is the priority, optimize for revenue. If engagement is the goal, optimize for click rate. Clear alignment prevents confusion later.
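To see why the KPI has to be fixed first, here is a minimal Python sketch of the mixed-result scenario above. The `VariantResult` class, the `pick_winner` helper, and every number are made up for illustration. It ignores statistical significance and only shows that the same data produces a different winner depending on which metric you pick.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantResult:
    name: str
    click_rate: float  # clicks / recipients
    revenue: float     # attributed revenue in your currency


def pick_winner(results, success_kpi):
    """Return the result with the highest value of the pre-declared KPI."""
    return max(results, key=lambda r: getattr(r, success_kpi))


# Hypothetical numbers: the variant wins on clicks, the control on revenue.
results = [
    VariantResult("control", click_rate=0.021, revenue=4800.0),
    VariantResult("variant", click_rate=0.027, revenue=4350.0),
]

# Written down BEFORE launch, not chosen after looking at the data.
SUCCESS_KPI = "revenue"

winner = pick_winner(results, SUCCESS_KPI)
print(f"Winner by {SUCCESS_KPI}: {winner.name}")
```

Changing `SUCCESS_KPI` to `"click_rate"` flips the outcome. That is exactly the debate you avoid by deciding upfront.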

Step 3: Prioritize for impact and feasibility

Once you have a list of potential tests, prioritize based on:

- Impact: How much could this move revenue or retention?

- Effort: How difficult is it to implement?

- Conflicts: Does it overlap with another active test?

Focus on tests with high impact and low effort first.
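One way to make this ranking concrete is a simple impact-to-effort score. The sketch below is an assumption, not a standard formula. The 1 to 5 scales, the `ExperimentIdea` class, and the sample backlog are all hypothetical, and your team may weight these factors differently.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    impact: int  # team estimate, 1 (low) to 5 (high)
    effort: int  # team estimate, 1 (easy) to 5 (hard)
    conflicts_with_active_test: bool = False


def prioritize(ideas):
    """Drop ideas that overlap an active test, then rank by impact / effort."""
    runnable = [i for i in ideas if not i.conflicts_with_active_test]
    return sorted(runnable, key=lambda i: i.impact / i.effort, reverse=True)


# Hypothetical backlog from a brainstorm session.
backlog = [
    ExperimentIdea("Subject line tone", impact=3, effort=1),
    ExperimentIdea("Welcome flow length", impact=5, effort=4),
    ExperimentIdea("CTA placement", impact=4, effort=2, conflicts_with_active_test=True),
    ExperimentIdea("Send time 9am vs 5pm", impact=2, effort=1),
]

ranked = prioritize(backlog)
for idea in ranked:
    print(f"{idea.name}: score {idea.impact / idea.effort:.2f}")
```

Conflicting ideas are held back rather than scored, so they return to the queue once the overlapping test ends.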

Also consider timing. Some tests only make sense in specific windows, such as Black Friday/Cyber Monday (BFCM) or a major product launch. If seasonality matters, document it and add the test to your content calendar.

Testing isn’t random. It’s strategic.

Step 4: Isolate one variable

If you take one rule from this article, let it be this:

Test one thing at a time.

The most common way to invalidate a test is introducing multiple variables.

Variables can include:

- Email creative

- Subject line

- Preview text

- Send time

- Offer

- Audience segment

- Exclusions

- Seasonality

- Subscriber quality

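If more than one of these differs between control and variant, you won’t know what caused the performance shift. You can’t set seasonality or subscriber quality directly, so hold them constant: run both versions over the same dates and split the audience randomly.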

Example: Testing send time

If you’re testing whether 9:00 a.m. performs better than 5:00 p.m., everything else must remain identical:

- Same audience

- Same exclusions

- Same email design

- Same offer

- Same subject line

The only difference should be send time.

Clean tests produce reliable insights. Messy tests produce guesses.
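A quick pre-launch check can catch accidental extra changes. The sketch below assumes you can describe each version as a plain dictionary of settings. The field names and values are hypothetical and do not come from any platform's API.

```python
def changed_fields(control, variant):
    """Return the sorted list of settings that differ between two versions."""
    keys = set(control) | set(variant)
    return sorted(k for k in keys if control.get(k) != variant.get(k))


# Hypothetical settings for the send-time test described above.
control = {
    "audience": "engaged-90-days",
    "exclusions": "purchased-last-7-days",
    "design": "spring-template-v2",
    "offer": "free-shipping",
    "subject_line": "New arrivals are here",
    "send_time": "09:00",
}
clean_variant = {**control, "send_time": "17:00"}
messy_variant = {**control, "send_time": "17:00", "offer": "10-percent-off"}

for label, variant in [("clean", clean_variant), ("messy", messy_variant)]:
    diff = changed_fields(control, variant)
    status = "OK" if len(diff) == 1 else "NOT ISOLATED"
    print(f"{label}: {diff} -> {status}")
```

If the list contains anything other than the one variable you intend to test, fix the setup before launching.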

Step 5: Run the test

Launch the experiment and monitor performance.

Klaviyo offers built-in testing tools across campaigns, flows, sign-up forms, and more. Use them. They simplify split logic and measurement.

But don’t overreact to early data. Wait for statistical significance before calling a winner. Small performance swings in the first few hours often correct themselves.

Discipline beats impulse.
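Your platform may report significance for you. If you want to sanity-check a rate metric yourself, one common approach is a two-proportion z-test. The sketch below is a generic textbook version using only the Python standard library, not a description of how any particular tool computes it. All counts are invented.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). Assumes reasonably large samples in both groups.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical early read: same click rates as below, but only 200 sends each.
z_early, p_early = two_proportion_z_test(4, 200, 5, 200)

# Hypothetical full read: 2.0% vs 2.5% click rate on 10,000 sends each.
z_full, p_full = two_proportion_z_test(200, 10_000, 250, 10_000)

print(f"early: z={z_early:.2f}, p={p_early:.3f}")
print(f"full:  z={z_full:.2f}, p={p_full:.3f}")
```

The rates are identical in both reads. Only the full-sample read clears the conventional 0.05 threshold, which is why early swings should not decide a winner.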

Step 6: Track results outside the platform

You can reference results in the Experiments tab, but we recommend maintaining a centralized spreadsheet.

Why?

Because context matters. In your tracking document, include:

- Hypothesis

- KPI used to define success

- Audience details

- Test duration

- Seasonality notes

- Final decision

- Key learnings

Too many brands start tests and forget why. Or a new team member joins and has no context for past decisions.

Documentation turns testing into institutional knowledge.
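If your tracking document lives in a spreadsheet, a fixed set of columns keeps entries consistent. The sketch below mirrors the fields listed above as a hypothetical `ExperimentRecord` and writes them as CSV, which most spreadsheet tools can import. The column names and the sample entry are illustrative assumptions.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    hypothesis: str
    success_kpi: str
    audience: str
    start_date: str  # ISO dates keep the sheet sortable
    end_date: str
    seasonality_notes: str
    decision: str
    key_learnings: str


def to_csv(records):
    """Serialize records to CSV text with one header row."""
    buffer = io.StringIO()
    columns = [f.name for f in fields(ExperimentRecord)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical entry for illustration only.
log = [
    ExperimentRecord(
        hypothesis="A 5:00 p.m. send will out-earn a 9:00 a.m. send",
        success_kpi="revenue",
        audience="Engaged subscribers, random 50/50 split",
        start_date="2024-03-04",
        end_date="2024-03-18",
        seasonality_notes="No promotions running",
        decision="Inconclusive, rerun with a larger audience",
        key_learnings="Click rate rose but revenue did not move",
    )
]

csv_text = to_csv(log)
print(csv_text)
```

Because every field is required, a record can't be saved without its hypothesis or KPI. That enforces the habit this step describes.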

Step 7: Repeat before scaling

One result is not a universal truth.

A test that wins for recent subscribers may not win for loyal subscribers. A campaign insight may not apply to a welcome flow.

Repetition is part of the scientific process. Validate wins across:

- Different segments

- Different buying stages

- Different campaign types

Only then should you apply changes broadly.

Don’t shy away from bold tests

Small tweaks are useful. But big changes can create step-function growth.

If you’re concerned about risk—especially in high-revenue flows—limit exposure.

For example:

- Run the variant on 5–10% of the audience.

- Monitor revenue closely.

- Expand only if performance holds.

This approach protects revenue while allowing innovation.
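Built-in split tools usually handle the allocation for you. If you ever need to reproduce a limited-exposure split outside the platform, a deterministic hash is one common technique. The sketch below is a generic illustration. The `assign_variant` function, the profile IDs, and the test name are hypothetical.

```python
import hashlib


def assign_variant(profile_id, test_name, variant_share=0.10):
    """Deterministically place a profile in 'variant' or 'control'.

    The same profile and test name always map to the same group, and
    roughly `variant_share` of profiles land in the variant.
    """
    digest = hashlib.sha256(f"{test_name}:{profile_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "variant" if bucket < variant_share else "control"


# Hypothetical audience of 10,000 profiles with a 10% exposure cap.
profiles = [f"profile-{i}" for i in range(10_000)]
groups = [assign_variant(p, "welcome-redesign") for p in profiles]
share = groups.count("variant") / len(groups)
print(f"Variant share: {share:.1%}")
```

Including the test name in the hash means a profile that lands in the variant for one test is not automatically in the variant for the next.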

Playing it safe forever is its own risk.

Common testing mistakes to avoid

Even experienced marketers fall into these traps.

1. Misunderstanding metrics

Different metrics tell different stories. Know what you’re optimizing for.

- Click rate: Indicates engagement and traffic to your site.

- Orders and revenue: Show monetary impact and segmentation effectiveness. Higher is generally better.

- AOV: A substantially higher AOV may indicate stronger long-term revenue potential, but it can coincide with fewer orders. Review it alongside total orders and revenue for a holistic view.

- Unsubscribe rate: Reflects content relevance, frequency, targeting, and timing.

Optimizing for clicks while ignoring revenue can mislead you. Always evaluate metrics in context.
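A short worked example shows how one metric can mislead on its own. The numbers below are invented, and `summarize` is a hypothetical helper. Revenue equals orders multiplied by AOV, so a higher AOV with fewer orders can still mean less revenue.

```python
def summarize(recipients, orders, revenue):
    """Derive per-recipient and per-order metrics from raw totals."""
    return {
        "aov": revenue / orders,
        "order_rate": orders / recipients,
        "revenue_per_recipient": revenue / recipients,
    }


# Hypothetical results with equal audience sizes.
control = summarize(recipients=10_000, orders=100, revenue=5000.0)
variant = summarize(recipients=10_000, orders=80, revenue=4800.0)

for name, stats in [("control", control), ("variant", variant)]:
    print(
        f"{name}: AOV {stats['aov']:.2f}, "
        f"order rate {stats['order_rate']:.2%}, "
        f"revenue per recipient {stats['revenue_per_recipient']:.2f}"
    )
```

Here the variant has the higher AOV, yet the control earns more per recipient. Which one "wins" depends on the KPI you declared before launch.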

2. Running unclean tests

Any A/B test should isolate one variable.

If you’re testing CTA placement, don’t also change:

- The design

- The discount

- The audience

- The subject line

When multiple variables shift, attribution breaks.

3. Choosing the wrong testing window

Testing too short:

- You risk acting on noise.

Testing too long:

- You delay improvements.

Large-scale strategic tests often require weeks or months unless results reach statistical significance quickly. Smaller tests can run over shorter windows.

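Don’t call a winner without statistical significance. If a test reaches its planned end date without it, record the result as inconclusive rather than picking a side.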
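To choose a window before launch, you can estimate how many recipients each group needs and divide by your daily volume. The sketch below uses a standard approximation for comparing two proportions. The baseline rate, the expected lift, the daily volume, and the defaults (5% significance, 80% power) are assumptions you should replace with your own.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate recipients needed per group for a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(
            baseline_rate * (1 - baseline_rate)
            + expected_rate * (1 - expected_rate)
        )
    ) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical goal: detect a lift from a 2.0% to a 2.5% click rate.
n_per_group = sample_size_per_group(0.020, 0.025)

# Hypothetical flow volume: 500 new recipients per day across both groups.
daily_recipients = 500
days_needed = math.ceil(2 * n_per_group / daily_recipients)

print(f"Recipients per group: {n_per_group}")
print(f"Estimated test length: {days_needed} days")
```

With these inputs the estimate comes to roughly 14,000 recipients per group. That is why a low-volume flow can need weeks or months, while a large campaign send can answer the same question in a day.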

4. Failing to document the “why”

Without documentation, tests lose meaning.

You need to know:

- Why the test started.

- What hypothesis you were validating.

- What business problem you were solving.

Otherwise, you’re just changing things.

5. Setting and forgetting flow tests

Flows generate consistent revenue. That’s why they require active management.

Set reminders to review flow tests weekly. Make sure:

- Tests are still relevant.

- Winning variants are implemented.

- Old experiments aren’t running indefinitely.

Testing should be intentional, not accidental.

High-impact tests you can start today

If you’re unsure where to begin, start here:

- Subject line and preview text

- CTA placement within email

- CTA design

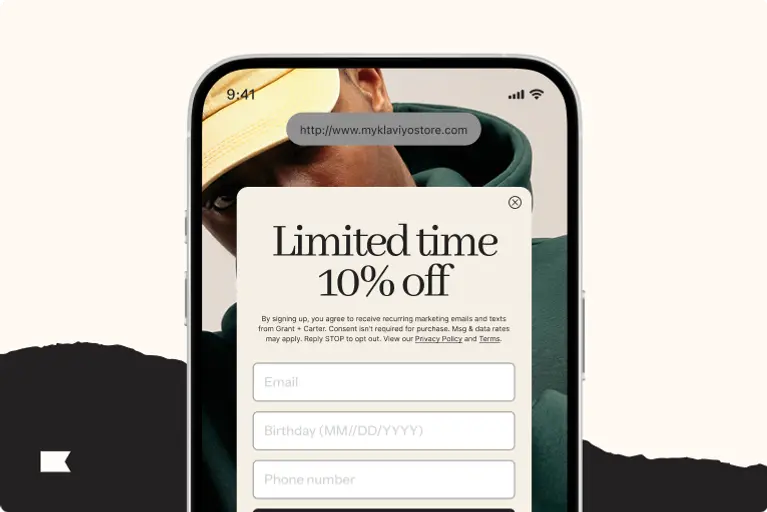

- Sign-up form imagery

- Sign-up form copy and prompts

- Teaser vs. no teaser bubble on sign-up forms

- Time delays within automated flows

- Email or SMS send time

- Offer type by customer segment

- Welcome flow content

- Welcome flow length and number of messages

- Dynamic product blocks in campaigns or flows

These tests require relatively low effort and can meaningfully impact engagement and revenue.

Build a culture, not just a test

Testing isn’t about running experiments occasionally. It’s about building a culture of curiosity and accountability.

Ask regularly:

- What are we trying to improve?

- What does success look like?

- What did we learn?

- What do we test next?

When testing becomes systematic, growth becomes predictable.

Clarifying follow-ups

To tailor this framework to your brand, consider:

- What is your primary KPI right now—revenue, retention, AOV, or engagement?

- How long do your average buying cycles last?

- Which flows generate the most revenue today?

- Do you currently track testing results in a centralized document?

- Are there seasonal windows (like BFCM) that affect your roadmap?

Answer these, and your testing strategy becomes sharper immediately.