For years, marketers have used A/B testing to optimize marketing assets.

In 2012, Bing performed a small A/B test on the way their search engine displayed ad headlines. It led to a 12% increase in revenue—more than $100M in the US alone.

But that was over a decade ago. Since then, getting attention online has become a lot more competitive. Noah Kagan, founder of web app deals website AppSumo, recently revealed that only 1 in 8 of their A/B tests produces significant results. That means brands now have to run these tests 1) at scale and 2) beyond email to keep iterating at a competitive rate.

This is where nailing A/B testing best practices can help.

A/B testing (or split testing) is a research methodology that involves testing variations of a marketing email, SMS message, landing page, or website form to determine which one yields higher open rates, click rates, conversion rates, and more.

Effective A/B testing, especially when paired with AI, can significantly boost your marketing results. But without a solid strategy, it can easily turn into a waste of time and money. For your A/B testing to deliver real results, you need to set clear and relevant criteria from the start.

Here are 12 best practices to follow to make sure your A/B tests lead to meaningful improvement in your brand’s marketing performance:

1. Develop a hypothesis

As with any experiment, start with a hypothesis: an educated guess about what will drive better results.

Your hypothesis determines which elements you’ll test. Keep it clear and simple. Here are a few examples of hypotheses that could work:

- The subject line of my abandoned cart email will drive higher open rates when it references the name of the product.

- An email that looks like it’s coming from a person rather than our company name will earn more opens.

- A text message will drive more clicks when it’s preceded by an email.

- A sign-up form will drive higher list growth if it appears after the shopper has scrolled at least 75% of the page.

Real-life A/B testing example: Sustainable clothing brand Brava Fabrics hypothesized that a sign-up form offering a contest entry with a one-time prize would perform just as well as a discount offer—while costing the company less. (Read on to see what happened.)

2. Decide how you’ll determine the winner

Before running an A/B test, you need to decide how you’ll determine the winner. To that end, choose a performance reporting metric based on the A/B test variable. For example:

- Open rate: if you’re testing “from” name, subject line, or preview text

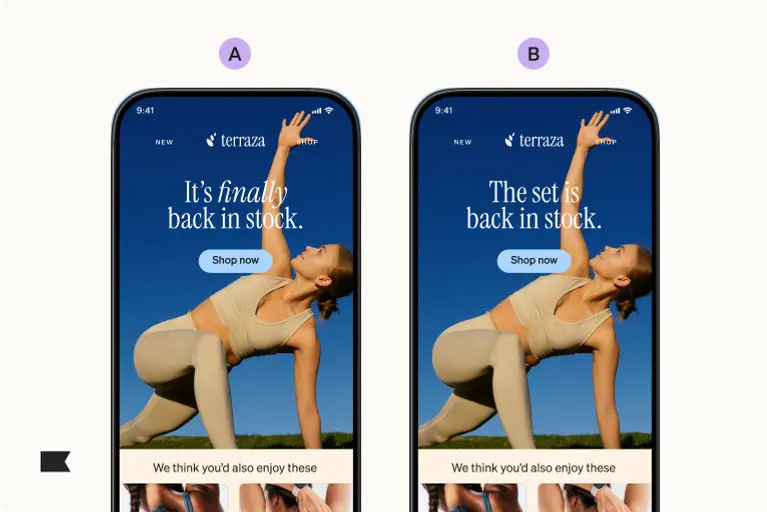

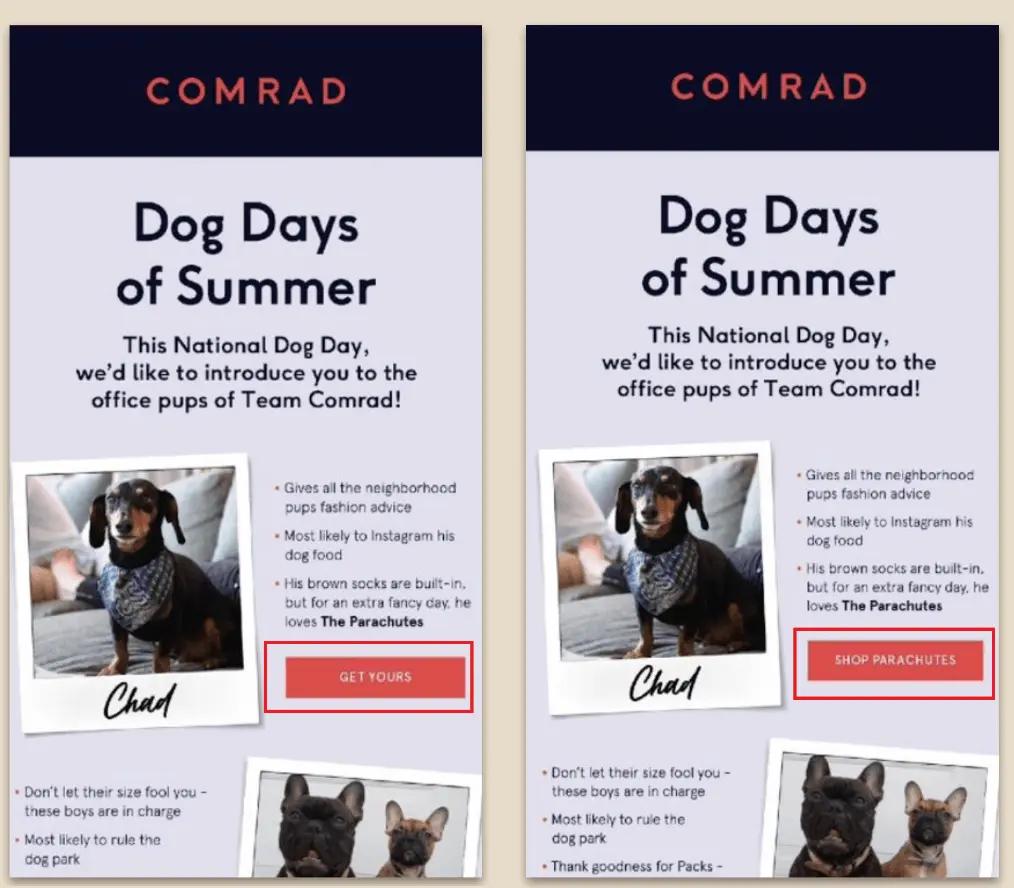

- Click rate: if you’re testing content like email layout, different visuals, CTA appearance, or CTA copy

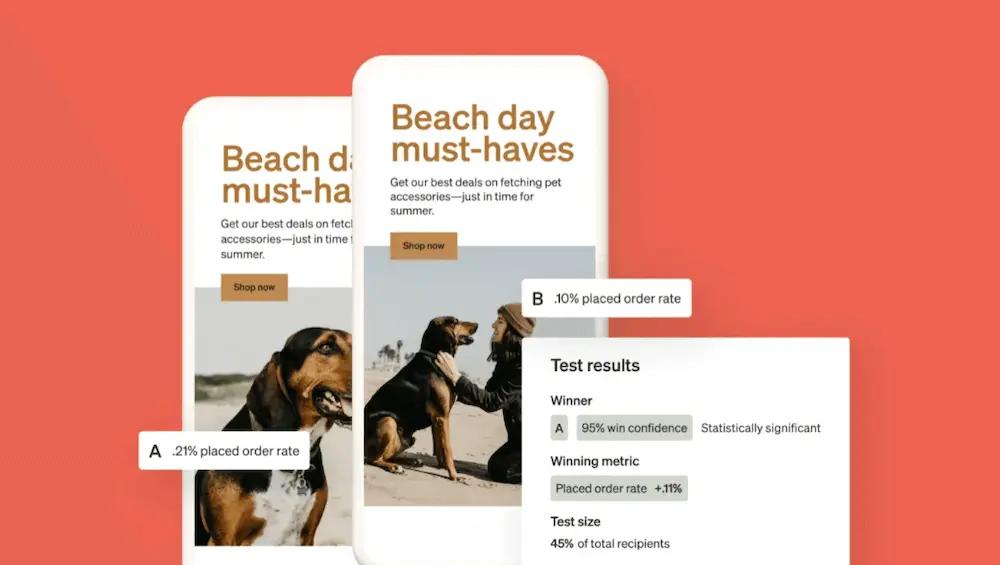

- Placed order rate: if you’re testing content like social proof, send time, or CTA placement
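If you export raw counts from your tests, the pairing of test variable and winning metric above can be sketched in a few lines of Python. This is only an illustration: the field names, the `WINNING_METRIC` mapping, and the sample numbers are assumptions made up for this example, and each rate is assumed to be events divided by delivered messages.

```python
from dataclasses import dataclass


@dataclass
class VariationStats:
    """Raw counts for one variation (hypothetical field names)."""
    delivered: int
    opens: int
    clicks: int
    orders: int


# Which count decides the winner for each tested variable (mirrors the list above).
WINNING_METRIC = {
    "from_name": "opens",
    "subject_line": "opens",
    "preview_text": "opens",
    "layout": "clicks",
    "cta_copy": "clicks",
    "social_proof": "orders",
    "send_time": "orders",
}


def metric_rate(stats: VariationStats, metric: str) -> float:
    """Share of delivered messages that produced the chosen event."""
    if stats.delivered == 0:
        return 0.0
    return getattr(stats, metric) / stats.delivered


def pick_leader(variable: str, variations: dict) -> str:
    """Return the name of the variation with the highest rate on the chosen metric."""
    metric = WINNING_METRIC[variable]
    return max(variations, key=lambda name: metric_rate(variations[name], metric))


# Made-up counts for two variations
results = {
    "A": VariationStats(delivered=1000, opens=220, clicks=40, orders=9),
    "B": VariationStats(delivered=1000, opens=260, clicks=35, orders=8),
}
print(pick_leader("subject_line", results))  # B (higher open rate)
print(pick_leader("cta_copy", results))      # A (higher click rate)
```

Note that the leader on a metric isn't automatically a statistically significant winner. That part comes later.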

Real-life A/B testing example: Brava Fabrics decided to determine their A/B test winner based on sign-up form submission rate.

3. Test only one variable at a time

You probably have many ideas for A/B testing your emails, texts, and forms. But it’s crucial to test one variable at a time. Testing more than one variable simultaneously makes it impossible to attribute results.

Let’s say you edit both the color and the copy on your email CTA, and you notice a spike in click rates. You won’t know if the increased engagement was the result of the change in color or the change in copy.

Or let’s say you want to test whether including an image on your sign-up form increases submission rates. If you display the form without an image when visitors land, but you display the form with an image when visitors show exit intent, you won’t be able to tell if any changes in performance are the result of the change in visuals or the change in timing.

By testing one variable at a time, you can be sure you understand exactly what it is your audience is responding to.

Real-life A/B testing example: The single element Brava Fabrics tested was their offer.

4. Test no more than 4 variations at a time

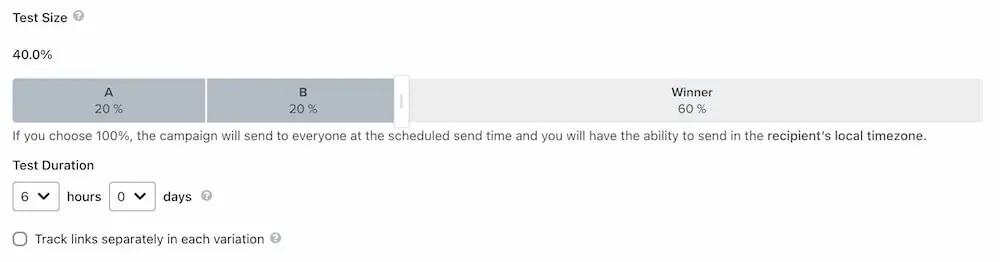

In the same vein, the more variations you add, the bigger the audience you’ll need to make sure you get reliable results (more on this next).

We recommend testing up to 4 variations of the same variable at a time—for example, the same CTA button in 4 different colors. If you’re concerned that your sample size isn’t large enough to accommodate that many variations, stick with 2–3.
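To see why extra variations demand a bigger audience, here's a rough sketch that splits a fixed audience evenly and counts how many head-to-head comparisons you end up making. The function name and the 12,000-person audience are hypothetical, and the Bonferroni correction (dividing your significance threshold by the number of comparisons) is one common, conservative convention we're assuming here, not something this article prescribes.

```python
from math import comb


def split_plan(audience_size: int, variations: int, alpha: float = 0.05) -> dict:
    """Summarize how an audience is divided across equally sized variations."""
    if variations < 2:
        raise ValueError("An A/B test needs at least 2 variations")
    comparisons = comb(variations, 2)  # number of pairwise comparisons
    return {
        "recipients_per_variation": audience_size // variations,
        "pairwise_comparisons": comparisons,
        # Assumed Bonferroni correction: stricter threshold per comparison
        "alpha_per_comparison": alpha / comparisons,
    }


for k in (2, 3, 4):
    print(k, split_plan(12_000, k))
```

With 4 variations, each one gets only a quarter of the audience, and there are 6 pairs to compare instead of 1, so every individual comparison has less data and a stricter bar to clear.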

Real-life A/B testing example: Brava Fabrics tested 3 variations of their offer:

- A 10% discount in exchange for an email sign-up

- Entry to a contest where one person would win €300 in exchange for an email sign-up

- Entry to a contest where one person would win €1,000 in exchange for an email sign-up

Speaking of sample size…

5. Use a large sample and achieve statistical significance

A/B testing is a statistical experiment where you derive insight from the response of a group of people. The larger the sample size of the study, the more accurate the results. Testing across too small a group can yield misleading results because the results might not represent the behavior of your entire audience.

Imagine you run an A/B test on a sign-up form. On the first day, 10 people see the form, and all of them sign up. But over the next week, 2,000 more visitors come to your site, and almost all of them close the form without signing up. If you had ended the test after only one day, you’d think your form was a huge success, even though a larger sample showed otherwise.
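If you want a ballpark for how large "large enough" is, one standard approach is the normal-approximation sample size formula for comparing two rates. The sketch below assumes a two-sided test at a 5% significance level with 80% power, which are common defaults rather than figures from this article, and the function name is made up for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variation(
    baseline_rate: float,
    expected_rate: float,
    alpha: float = 0.05,
    power: float = 0.80,
) -> int:
    """Approximate recipients needed per variation to detect the given change in rate."""
    if baseline_rate == expected_rate:
        raise ValueError("The two rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical goal: lift a 3% sign-up rate to 4%
print(sample_size_per_variation(0.03, 0.04))
# A smaller lift (3% to 3.5%) needs far more people
print(sample_size_per_variation(0.03, 0.035))
```

The takeaway: the smaller the improvement you're hoping to detect, the more people each variation needs to reach before you can trust the result.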

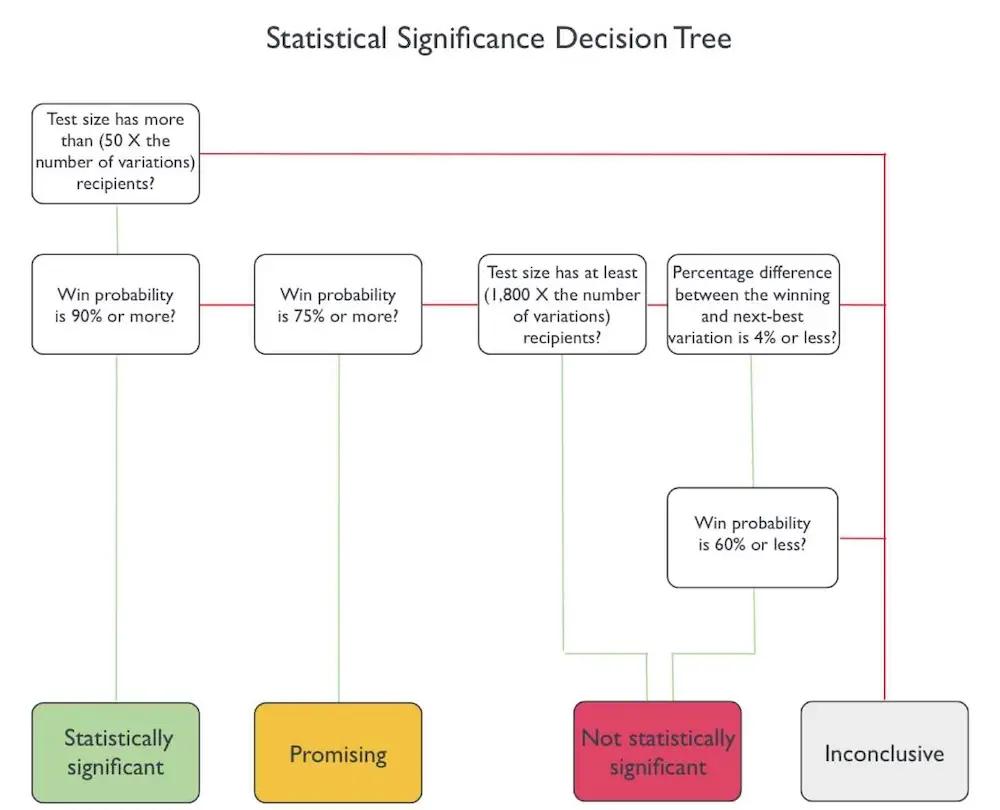

Similarly, your A/B test results aren’t conclusive until they’re statistically significant. Without statistical significance, small variations might be due to chance rather than a true difference in performance.

Klaviyo helps simplify this by organizing your test results into easy-to-understand categories:

- Statistically significant: A certain variation of your test is highly likely to win over the other option(s). You could reproduce the results and apply what you learned to your future sends.

- Promising: One variation looks like it’s performing better than the other(s), but the evidence isn’t strong enough based on one test. Consider running another.

- Not statistically significant: One variation beats the other(s) in the test, but only by a slight amount. You may not be able to replicate the result in another test.

- Inconclusive: There’s not enough information to determine whether or not something is statistically significant. In that case, you may want to expand your recipient pool or follow up with more tests.
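To make statistical significance a bit more concrete, here's a minimal sketch of one widely used check, the two-proportion z-test. To be clear, this isn't a description of how Klaviyo sorts results into the categories above. The function name and the sample counts are made up for illustration, and the usual 0.05 cutoff is a convention, not a rule.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z statistic, two-sided p-value) for the difference in conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("Cannot test when every or no recipient converted")
    z = (rate_b - rate_a) / std_err
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail probability of a standard normal
    return z, p_value


# Large sample: 200 of 5,000 vs. 260 of 5,000 conversions
z, p = two_proportion_z_test(200, 5000, 260, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")

# Small sample with a similar-looking gap: 4 of 100 vs. 5 of 100
z, p = two_proportion_z_test(4, 100, 5, 100)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")
```

The first comparison clears the 0.05 threshold and the second doesn't, even though variation B is ahead in both. That's the difference between a real pattern and a gap that could easily be chance.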

Always wait until your results are statistically significant or until you have a good sample size of viewers before ending your test. Klaviyo recommends setting your test size proportional to your list size or average website traffic, such as 20% of your list for the first version of your email and 20% for the second.
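Here's what a 20%/20% split might look like if you were doing it by hand. Klaviyo handles this for you, so treat this as a sketch of the idea only: the function name, the group labels, and the example addresses are all hypothetical.

```python
import random
from typing import Optional


def split_test_groups(
    recipients: list,
    test_share: float = 0.20,
    variations: int = 2,
    seed: Optional[int] = None,
) -> dict:
    """Randomly assign a share of the list to each variation; the rest waits for the winner."""
    if test_share * variations > 1:
        raise ValueError("Test groups would exceed the size of the list")
    shuffled = recipients[:]
    random.Random(seed).shuffle(shuffled)  # random assignment avoids biased groups
    group_size = round(len(shuffled) * test_share)
    groups = {}
    for i in range(variations):
        label = chr(ord("A") + i)  # "A", "B", ...
        groups[label] = shuffled[i * group_size:(i + 1) * group_size]
    groups["remainder"] = shuffled[variations * group_size:]
    return groups


subscribers = [f"user{i}@example.com" for i in range(1000)]
groups = split_test_groups(subscribers, seed=42)
print({name: len(members) for name, members in groups.items()})
# {'A': 200, 'B': 200, 'remainder': 600}
```

Shuffling before slicing matters: if you simply took the first 200 people on your list, you might end up testing only your oldest (or newest) subscribers.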

6. Test low-effort elements first

When you’re first starting out, choose high-impact, low-effort elements that take less time to test. Here’s where to start:

- Subject line or headline

- CTA button copy

- The use of emojis

Once you test your low-effort elements and interpret the data, move on to high-effort ones like:

- Format

- Layout

- Visuals

7. Prioritize the messages you send the most

During your A/B testing, focus on the emails and texts you send the most, especially the ones that generate sales or keep customers coming back.

These could include:

- Abandoned cart reminders

- Promotional campaigns

- Welcome emails

You can also look at your historical list and segment sizes and prioritize the marketing messages that are seen by the largest number of people. Focusing on these communications ensures you reach many recipients and get a better return on investment (ROI) on your A/B testing efforts.

8. Don’t edit a live test

Once you’ve launched your A/B test, it’s important to let it run its course without making changes to the test that could confuse the results.

Klaviyo doesn’t allow users to modify a live A/B test for forms or for email and SMS campaigns. If you need to make changes to a campaign, cancel the send and then restart your test to ensure you’re getting the most accurate data.

Klaviyo does, however, allow you to modify a live A/B test for email and/or SMS flows—but you shouldn’t. Instead, if you need to make changes to an automation, end your A/B test first.

9. Wait enough time before evaluating performance

People tend to read text messages right away. But even if you’ve sent an email to a large group of people, they might not engage with it immediately. Some subscribers might open your email right after it lands in their inbox, while others might not get around to it for a day or two.

If you’re testing an abandoned cart email, give it enough time—preferably 3–5 days—before checking how many people completed their purchase. On the other hand, when testing open rates or click rates on a flash sale text, you might get results faster.

Klaviyo recommends setting a test duration based on the A/B test metric you’re evaluating—for example, if you’re using placed orders as your success metric for an email A/B test, your test duration will probably be longer than if you’re evaluating based on opens or clicks.

Real-life A/B testing example: After running their A/B test, Brava Fabrics determined that their offers performed identically. Notably, increasing the prize amount didn’t impact conversion rate. Giving only one customer a prize, rather than offering everyone the same discount, helped the brand protect their marketing budget.

10. Ask for feedback

If you’re planning on making a big shift in your marketing, A/B testing can be a great way to see if your audience responds well to new ideas.

Experimentation provides valuable data, but getting direct feedback from your customers can reveal insights that numbers alone cannot—which makes asking for feedback a useful strategy to use in tandem with your A/B test experiments.

One easy way to communicate with subscribers who were recently part of an A/B test is to tag those who interacted with your CTA. Then, you can follow up with a personalized email asking for their feedback. You might write something like, “Hey Sarah, we’re always looking to improve! What did you think of our latest email? Any suggestions to make it even better?”

By focusing only on those who engaged with your messages, you’re more likely to receive detailed and actionable responses that you might not get from analytics alone. This not only helps you refine future campaigns, but also strengthens your connection with your audience by showing you value their opinion.

11. A/B test for individuals, not majorities

A one-size-fits-all approach to A/B testing can be incredibly effective, but remember: it’s based on majority rule. True 1:1 personalization requires taking A/B testing a step further.

With Klaviyo AI’s personalized campaigns feature, instead of sending the same default A/B test winner to everyone, you can tailor your sends to each individual recipient’s behavior and preferences.

When you select this option as your test strategy, Klaviyo uses AI to search for patterns amongst the test recipients who interact with each variation. After the testing period is over, Klaviyo predicts which variation will perform better for each recipient—and sends the rest of the recipients their preferred variation.

Let’s say you’re testing two email versions, and recipients with a customer lifetime value (CLV) over 100 tend to open variation A more often, while those with a CLV under 100 prefer variation B. In that case, Klaviyo AI will send each person the version they’re more likely to engage with.

(This, of course, is a simplified example. Klaviyo considers a range of data points, including things like historical engagement rates and location, to make these decisions.)
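The simplified CLV example can be sketched in code. This is a toy illustration only, not Klaviyo's model: it uses a single made-up rule (CLV above or below 100), hypothetical function names, and invented test data, and it simply picks the variation with the higher open rate in each segment.

```python
from collections import defaultdict


def clv_segment(clv: float) -> str:
    """Toy segmentation rule taken from the example: CLV over 100 vs. not."""
    return "high_clv" if clv > 100 else "low_clv"


def best_variation_by_segment(test_results) -> dict:
    """test_results: iterable of (segment, variation, opened) tuples from the test group."""
    sends = defaultdict(int)
    opens = defaultdict(int)
    for segment, variation, opened in test_results:
        sends[(segment, variation)] += 1
        opens[(segment, variation)] += int(opened)

    best = {}  # segment -> (variation, open rate)
    for (segment, variation), sent in sends.items():
        open_rate = opens[(segment, variation)] / sent
        if segment not in best or open_rate > best[segment][1]:
            best[segment] = (variation, open_rate)
    return {segment: variation for segment, (variation, _) in best.items()}


# Made-up test data: high-CLV recipients open A more, low-CLV recipients open B more
test_results = (
    [("high_clv", "A", True)] * 6 + [("high_clv", "A", False)] * 4
    + [("high_clv", "B", True)] * 3 + [("high_clv", "B", False)] * 7
    + [("low_clv", "A", True)] * 2 + [("low_clv", "A", False)] * 8
    + [("low_clv", "B", True)] * 5 + [("low_clv", "B", False)] * 5
)
winners = best_variation_by_segment(test_results)

# Recipients who weren't in the test get the variation their segment preferred
remaining = {"sam@example.com": 240.0, "ada@example.com": 35.0}
plan = {email: winners[clv_segment(clv)] for email, clv in remaining.items()}
print(plan)  # {'sam@example.com': 'A', 'ada@example.com': 'B'}
```

A real system would weigh many signals at once rather than one threshold, but the core idea is the same: learn from the test group, then predict per recipient.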

12. Lean on AI to make your A/B testing smarter

There’s more where that came from.

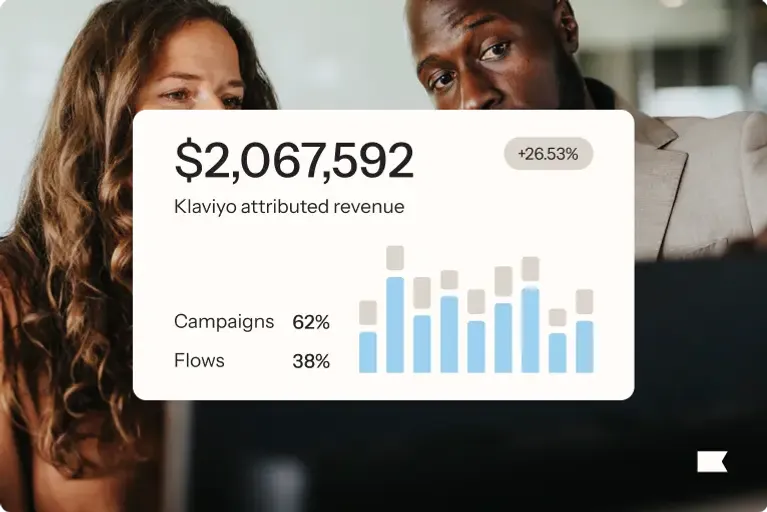

With Klaviyo, AI-powered A/B testing not only helps you direct your audience to the best-performing content—it also calculates the statistical significance of your test results and provides you with win percentage estimates.

It also sends the winning version to your subscribers automatically.
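If you're curious what a win percentage estimate could look like under the hood, one common approach is a Bayesian comparison: model each variation's conversion rate with a Beta distribution and count how often one beats the other across many random draws. We're not claiming this is how Klaviyo calculates its estimates. The function name, the uniform Beta(1, 1) prior, and the sample counts are all assumptions for this sketch.

```python
import random


def win_probability(conv_a: int, n_a: int, conv_b: int, n_b: int,
                    draws: int = 20_000, seed: int = 0) -> float:
    """Estimate the probability that B's true rate beats A's, via Monte Carlo sampling."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each rate under an assumed uniform Beta(1, 1) prior
        sample_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        sample_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += sample_b > sample_a
    return wins / draws


# Made-up counts: 200 of 5,000 conversions for A vs. 260 of 5,000 for B
print(f"Chance B beats A: {win_probability(200, 5000, 260, 5000):.1%}")
```

An estimate close to 100% means B is very likely the better variation, while something near 50% means the test hasn't separated them yet.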

Whether you want to put sign-up form optimization on autopilot or dial in your SMS and email send times, learn more about 5 actionable ways to make smart A/B testing work for you.
